After you have defined your assistant and configured one or more tasks, test your settings before you publish your NLP-enabled assistant. Bot owners and developers can chat with the assistant in real time to test recognition, performance, and flow as if it were a live session.

Testing a Virtual Assistant

To test your tasks in a messaging window, click the Talk to Bot icon in the lower-right corner of the XO Platform.

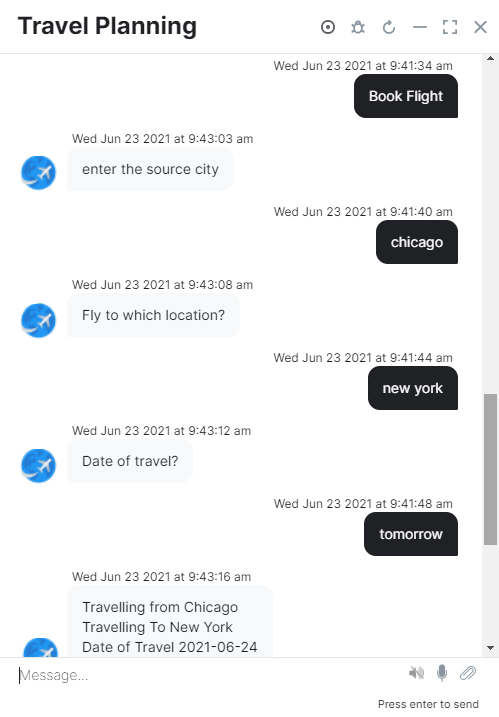

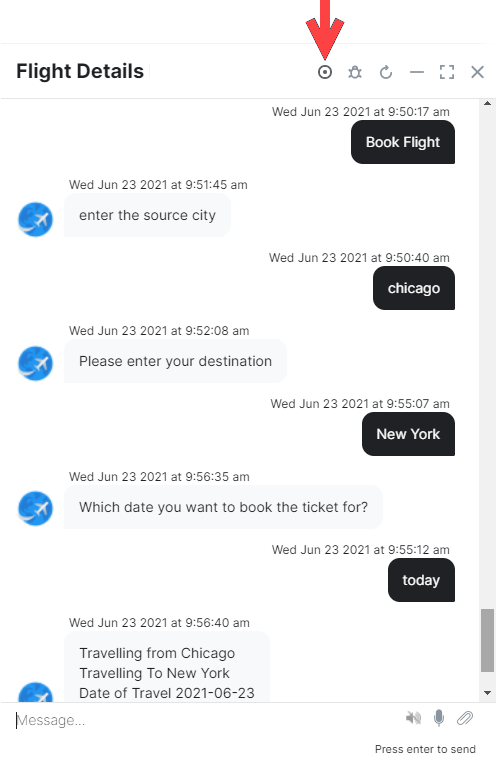

A messaging window for the assistant is displayed and connected to the NLP interpreter, as shown in the following illustration for a Flight Details Assistant.

When you first open the window, the Bot Setup Confirmation Message field definition for the assistant is displayed, if defined. In the Message section, enter text to begin interacting with and testing your assistant, for example, Book a flight. The NLP interpreter begins processing the task, verifies authentication with the user and the web service, and then prompts for the required task field information. When all the required task fields are collected, it executes the task.

While testing your assistant, try different variations of user prompts and ensure the NLP interpreter is processing the synonyms (or lack of synonyms) properly. If the assistant returns unexpected results, consider adding or modifying synonyms for your tasks and task field names as required. For more information, see Natural Language Processing.

Debugging and Troubleshooting

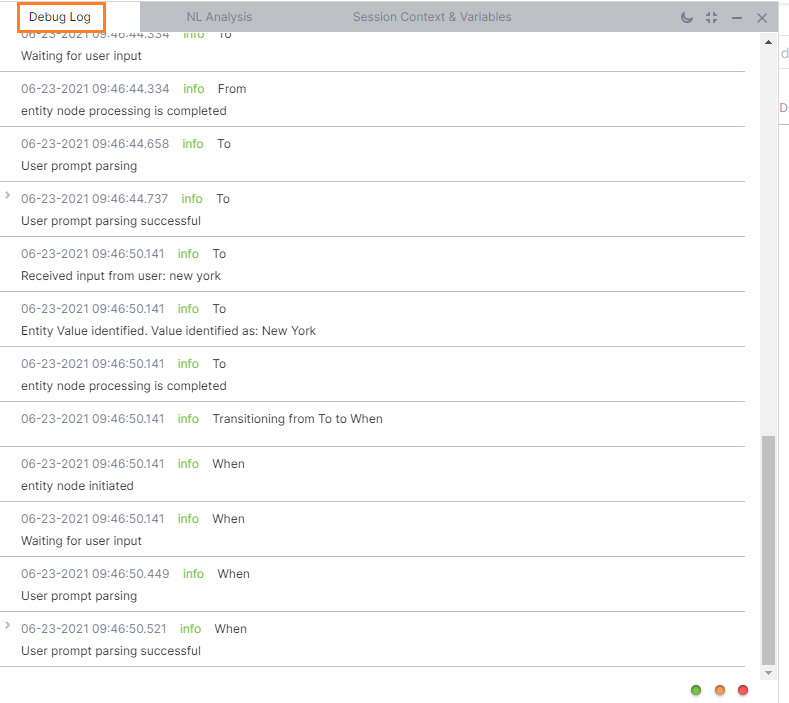

You can open a debug window to view the natural language processing analysis, the logs, and the session context and variables of the chat. To open the Debug window, click the Debug icon located on the top right-hand side of the Talk to Bot chat window. The Debug window consists of the following tabs: Debug Log, NL Analysis, and Session Context & Variables.

The Debug window lets you explore the following:

- NL Analysis: Describes the task loading status and, for each utterance, presents a task name analysis and recognition scores.

- Debug Log: Lists the processing or processed Dialog task components, which include Script Node, Service Node, and Webhook Node logs, along with a date and timestamp.

- Session Context & Variables: Shows both context object and session variables used in the dialog task processing.

Debug Log

The Debug Log shows the sequential progression of a dialog task, along with the context and session variables captured at every node. The Debug Log supports the following statuses:

- initiated: The XO Platform initiates the various nodes in a dialog task. For example, script, service, and webhook execution is initiated.

- execution: Indicates that execution of nodes has started. For example, script, service, and webhook execution has started.

- execution successful: Indicates that execution of nodes is successful. For example, script, service, and webhook execution is successful.

- process completed: Indicates that the execution process for the script, service, and webhook nodes is completed.

- expand: You can expand the node and click Show More to view node debug log details.

- node details: Shows the node details in script format. You can copy the script, open it in a full-screen view, or close the script view.

- parsing: The XO Platform begins to parse the user prompt.

- parsing successful: The user prompt is parsed successfully.

- waitingForUserInput: The user was prompted for input.

- pause: The current dialog task is paused while another task is started.

- resume: The current dialog with paused status continues at the same point in the flow after completion of another task that was started.

- waitingForServerResponse: The server request is pending an asynchronous response.

- error: An error occurred. For example, the loop limit is reached, or a server or script node execution fails.

- end: The dialog reached the end of the dialog flow.
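When reviewing a long debug log, it can help to group entries by the statuses listed above. The following is a minimal sketch only: the entry shape (`node`, `status`), the `summarizeDebugLog` helper, and the sample data are hypothetical and are not a format exported by the XO Platform. Only the status strings come from the list above.

```javascript
// Hypothetical entry shape: { node: string, status: string }.
// The status strings match the list above; everything else is an assumption.
const WAITING_STATUSES = new Set([
  "waitingForUserInput",
  "waitingForServerResponse",
  "pause",
]);

// Collects nodes that reported an error, nodes that are waiting or paused,
// and whether the dialog reached the "end" status.
function summarizeDebugLog(entries) {
  const summary = { errors: [], waiting: [], ended: false };
  for (const { node, status } of entries) {
    if (status === "error") {
      summary.errors.push(node);
    } else if (WAITING_STATUSES.has(status)) {
      summary.waiting.push(node);
    } else if (status === "end") {
      summary.ended = true;
    }
  }
  return summary;
}

// Illustrative sample data, not real platform output.
const sampleLog = [
  { node: "intent", status: "parsing successful" },
  { node: "getFlight", status: "initiated" },
  { node: "getFlight", status: "error" },
  { node: "dialog", status: "end" },
];

const logSummary = summarizeDebugLog(sampleLog);
console.log(logSummary);
```

A summary like this makes it easier to spot which node failed before expanding its details in the Debug Log tab.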

NL Analysis

The NL Analysis tab shows the task name analysis and recognition scores of each user utterance. It presents a detailed tone analysis, intent detection, and entity detection performed by the Kore.ai NLP engine. As a part of intent detection, the NL Analysis tab shows the outcomes of the Machine Learning, Fundamental Meaning, and Knowledge Graph engines. For a detailed discussion on the scores, see the Training Your Assistant topic.

NER information from the conversational context is also available on this tab. This information can be used for purposes such as node transitions.

Note: This is available only in Standard Bots.

Session Context and Variables

The Session Context & Variables tab dynamically displays the populated Context object and session variables, updated at each processed component in Dialog Builder. The following is an example of the Session Context & Variables panel in Debug Log. For more information about the parameters, see Using Session and Context Variables in Tasks and Context Object.

The same NER information that is visible in the NL Analysis tab also appears in this tab under Custom Variables. The platform shows the following details in the nerInfo object:

- componentId

- entityNERConfidenceScore

- entityName

- Value

Note that this feature is available only for Standard Bots.
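As an illustration of how the nerInfo details might be used for a decision such as a node transition, the sketch below filters entities by confidence. It rests on several assumptions: that nerInfo is an array of entries, that the value field is spelled `value`, and that scores fall between 0 and 1. The `confidentEntities` helper, the threshold, and the sample values are hypothetical, so check the actual object in the Session Context & Variables tab before relying on any of this.

```javascript
// Assumed shape: an array of entries with the four fields listed above.
// The array layout, the lowercase "value" key, and the 0..1 score range
// are assumptions, not confirmed platform behavior.
function confidentEntities(nerInfo, threshold) {
  return nerInfo
    .filter((entry) => entry.entityNERConfidenceScore >= threshold)
    .map((entry) => ({ name: entry.entityName, value: entry.value }));
}

// Illustrative sample data, not real platform output.
const sampleNerInfo = [
  {
    componentId: "dc-001",
    entityName: "destination",
    entityNERConfidenceScore: 0.92,
    value: "Paris",
  },
  {
    componentId: "dc-002",
    entityName: "travelDate",
    entityNERConfidenceScore: 0.41,
    value: "tomorrow",
  },
];

// Keep only entities the NER model is reasonably sure about.
const picked = confidentEntities(sampleNerInfo, 0.8);
console.log(picked);
```

A script node could use a check like this to skip an entity prompt when a confident value is already present, and fall back to prompting otherwise.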

System Commands

System Commands allow you to take control of the user-bot conversation during evaluation. These can also be injected into the assistant using JavaScript code. See here for more.

Record Session

The Record option allows you to record conversations to help in regression testing scenarios.

While recording is in progress, a notification appears at the top of the chat window. It includes a STOP option, which you can click whenever you want to stop recording.

Once you stop the recording, you can use the recorded conversation to create a test case for further conversation testing. Learn more.

After clicking Create Test Case, you can also download the recorded content as a JSON file.

This is what a short conversation with a Kore.ai assistant might look like: