A test case assertion is a statement that specifies a condition a test case must satisfy to be considered successful. In conversational systems, assertions can validate several aspects of a conversation, such as the response to a user’s input, the conversation flow, and the conversation context. Including multiple assertions in a test case lets you test the behavior of a conversational system thoroughly and confirm that it functions as expected.

Types of Assertions

This section describes the types of assertions available for different intent types.

Dialog Node Assertions

If the virtual assistant’s response is from a Dialog node, then the following assertions are available:


- Flow Assertion: A Flow assertion checks the path traversed for every user input during a conversation to verify that each response comes from the correct node. It helps detect and handle deviations from the expected flow.

- It refers to the tasks, nodes, FAQs, or standard responses that were triggered during the flow execution. This ensures that the bot has traversed the same path as expected.

- It is enabled by default. You can disable it if required.

- The summary on the right side of the Test Suite panel shows the following details:

  - Expected Node

  - Expected Intent

  - Transitions


- Text Assertion: A Text assertion tests the content presented to the user (string-to-string match), and provides support for dynamic values. Text assertions compare the text of the expected output with the actual output.

- It is enabled by default. You can disable it if required.

- The summary on the right side of the Test Suite panel shows the following details:

  - Expected Response: Contains all possible responses or variations, with the dynamic values annotated.

| Note: For a Text assertion, if the expected output contains dynamic values, annotate them using Dynamic Text Marking. If they are not marked, the Text assertion fails, which causes the test case to fail. |

For example, in the following test case, the city name entered by the user can be different every time. It is marked as dynamic for that specific test case to pass.

The test case and text assertion can be seen as passed in the Result Summary. If the text is not marked as dynamic, the test case would fail.


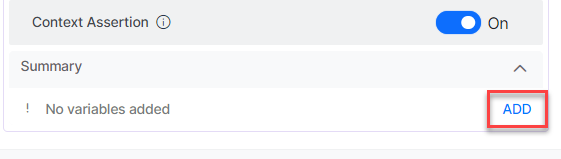

- Context Assertion: A context assertion can be used to test the presence of specific context variables during a conversation. By using a context assertion, you can verify that the correct context variables are present at a specific point in the conversation, which can be helpful for ensuring the smooth and successful execution of the conversation flow.

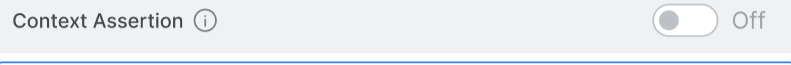

- It is disabled by default. You can enable it if required.


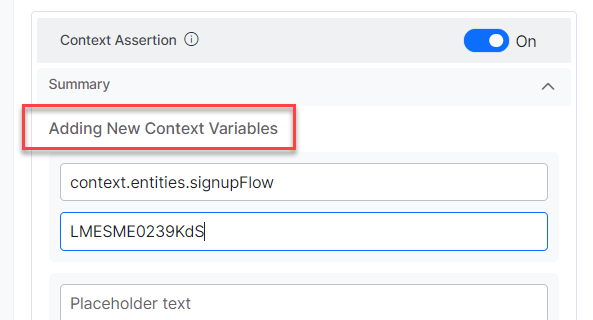

- Once enabled, you can click the Add button to add key-value pairs of context variables.


- The summary shows the following details for each added variable:

  - Key

  - Value

| Note: The Context assertion label is added to or removed from the test case in a validated chat depending on whether the assertion is enabled or disabled. After you add one key-value pair, text boxes for adding another pair are displayed. Saved key-value pairs are retained even if the assertion is later disabled. |

| Note: If the VA response comes from an FAQ, Small Talk, or Standard Response, the Flow assertion has only the Expected Node. Text and Context assertions behave the same way as they do for a Dialog intent. |
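The Flow and Context assertions described in this section can be pictured as simple comparisons between what the test case expects and what the VA actually did. The following is a minimal sketch, not the platform's implementation: the `TurnRecord` structure, its field names, and the sample node and intent names are hypothetical, and the sketch assumes that a Context assertion requires both the key and its value to match.

```python
from dataclasses import dataclass, field


@dataclass
class TurnRecord:
    """One VA response in a test case (hypothetical structure for illustration)."""
    node: str                  # node that produced the response
    intent: str                # intent active when the response was produced
    transitions: list          # (from_node, to_node) pairs traversed for this input
    context: dict = field(default_factory=dict)  # context variables at this point


def flow_assertion(expected: TurnRecord, actual: TurnRecord) -> bool:
    """Pass only if node, intent, and transitions all match the expected path."""
    return (
        expected.node == actual.node
        and expected.intent == actual.intent
        and expected.transitions == actual.transitions
    )


def context_assertion(expected_pairs: dict, actual_context: dict) -> bool:
    """Pass only if every expected key is present with the expected value."""
    return all(
        key in actual_context and actual_context[key] == value
        for key, value in expected_pairs.items()
    )


# Hypothetical sample data.
expected = TurnRecord("CityPrompt", "Book Flight", [("Start", "CityPrompt")])
actual = TurnRecord(
    "CityPrompt",
    "Book Flight",
    [("Start", "CityPrompt")],
    {"city": "Paris", "language": "en"},
)

flow_ok = flow_assertion(expected, actual)
context_ok = context_assertion({"city": "Paris"}, actual.context)
context_mismatch = context_assertion({"city": "Rome"}, actual.context)
print(flow_ok, context_ok, context_mismatch)
```

Extra variables in the actual context do not cause a failure in this sketch; only the key-value pairs added to the assertion are checked.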

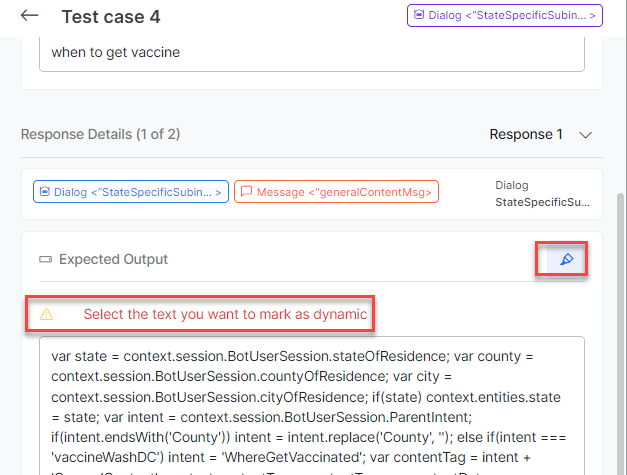

Dynamic Text Marking

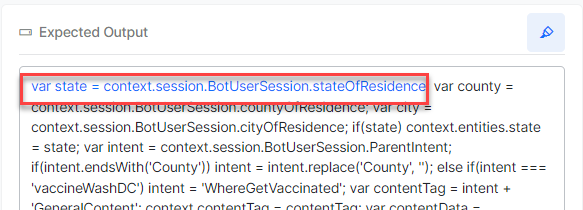

The dynamic text annotation feature in the Expected Output allows you to annotate a section of the text. You can add one or more annotations for a VA response.

During test execution, the platform ignores the annotated portion of the text when evaluating the Text assertion. You can view all the annotations added to a VA response and remove them.

| Note: The test case is marked as passed during execution even if the value of the marked text differs. This feature is handy when a different value is expected every time you interact with the bot. |
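One way to picture how a Text assertion can ignore dynamically marked text is to turn the expected output into a pattern in which each marked span matches any text and everything else must match exactly. This is only an illustrative sketch: representing annotations as `(start, end)` character offsets, and the function names used here, are assumptions rather than the platform's actual data model.

```python
import re


def build_pattern(expected: str, dynamic_spans: list) -> "re.Pattern":
    """Build a regex from the expected text; marked spans match any non-empty text."""
    parts = []
    cursor = 0
    for start, end in sorted(dynamic_spans):
        parts.append(re.escape(expected[cursor:start]))  # unmarked text must match exactly
        parts.append(r".+?")                             # marked text is ignored
        cursor = end
    parts.append(re.escape(expected[cursor:]))           # trailing unmarked text
    return re.compile("".join(parts), re.DOTALL)


def text_assertion(expected: str, dynamic_spans: list, actual: str) -> bool:
    """Pass if the actual output matches the expected output outside the marked spans."""
    return build_pattern(expected, dynamic_spans).fullmatch(actual) is not None


# Hypothetical example: the city name is marked as dynamic.
expected = "The weather in London is sunny."
start = expected.index("London")
spans = [(start, start + len("London"))]

marked_result = text_assertion(expected, spans, "The weather in Paris is sunny.")
unmarked_result = text_assertion(expected, [], "The weather in Paris is sunny.")
print(marked_result, unmarked_result)
```

With the city marked as dynamic, the assertion passes for a different city; without the marking, the same response fails the string-to-string comparison.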

The following steps explain the dynamic text marking with an example:


- Click any Test Suite on the Conversation Testing page.

- In the Test Editor tab, navigate to the Test Suite Details Panel.

- In the Test Suite Details panel, select a text in the Expected Output for a test case and click the Dynamic Marker icon (see the following screenshots).

| Note: The selected state variable in the Expected Output is annotated and highlighted in blue. One or multiple words can be marked as dynamic text. |

- Click the Dynamic Marker icon again to deselect the text and remove the dynamic marking.

- Click the Dynamic Marker icon next to the list to expand and see the list of dynamic values.

Test Coverage

Test Coverage captures details such as how many transitions and intents this test suite covers. Use it to identify missed intents and transitions and to add test cases that cover them.

This section explains how to access the Test Coverage and its details.

On the Conversation Testing landing page, in the Test Suite Details grid, click any Test Suite and then click the Test Coverage tab. Test Coverage contains the following three sub-tabs:

Dialog Intents

This page provides transition coverage information and details of transitions across the Dialog intents.

Transition Coverage

The following details are displayed in the Transition Coverage section:

- Total Transitions – Total number of unique transitions available in the VA definition across all the intents.

- Covered – Count and percentage of unique transitions covered as part of the Test Case definition against the Total Transitions list.

- Not Covered – Count and percentage of unique transitions not covered as part of the Test Case definition against the Total Transitions list.

For example, the VA has a total of 292 transitions, and the coverage is as follows:

- Covered – 15 transitions (5.14%)

- Not Covered – 277 transitions (94.86%)

| Note: If you delete a node transition in an intent, that transition is displayed as Not Valid in the Coverage Status column. |
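The counts and percentages in the Transition Coverage section follow from simple set arithmetic over unique transitions. The sketch below reproduces the 292-transition example; the function name, the `(from_node, to_node)` tuple representation, and the generated node names are assumptions made for illustration.

```python
def transition_coverage(all_transitions: set, covered_by_tests: set) -> dict:
    """Summarize unique transition coverage as (count, percentage) pairs."""
    total = len(all_transitions)
    # Only transitions that exist in the VA definition count as covered.
    covered = len(all_transitions & covered_by_tests)
    not_covered = total - covered

    def percent(count: int) -> float:
        return round(100 * count / total, 2) if total else 0.0

    return {
        "total": total,
        "covered": (covered, percent(covered)),
        "not_covered": (not_covered, percent(not_covered)),
    }


# 292 unique transitions in the VA definition, 15 of them exercised by test cases.
all_transitions = {(f"node{i}", f"node{i + 1}") for i in range(292)}
covered_by_tests = {(f"node{i}", f"node{i + 1}") for i in range(15)}

summary = transition_coverage(all_transitions, covered_by_tests)
print(summary)
# {'total': 292, 'covered': (15, 5.14), 'not_covered': (277, 94.86)}
```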

Intent Coverage

All the intents and their transition coverage details are displayed in this section.

The following details are displayed in the grid:

- Intent Name

- Coverage Status

- Total Transitions

- Transition Covered

- Transition Coverage (%)

You can obtain Node transition details of an intent by clicking the View Transitions slider. The Node Transitions pop-up displays the following details:

- From Node

- To Node

- Coverage Status

| Note: A transition has one of the following three values in the Coverage Status column: Covered, Not Covered, or Not Valid. |

You can sort, search, and filter the data for all the columns in the Intent coverage grid.

FAQs

In the FAQs tab of Test Coverage, all the unique FAQ names covered in this test suite are displayed.

Small Talk

All the Small Talks covered in the test cases are displayed in this tab in two columns:

- Pattern

- Group

To know more about Patterns and Groups, see Small Talk.

| Note: You can filter and sort the details displayed in the Small Talks grid. |

On the main page, a Test Suite is marked as Passed only when all of its test cases pass; otherwise, it is marked as Failed.
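The pass/fail rule can be summarized as two nested "all" checks: a test case passes only if each of its enabled assertions passes, and a suite passes only if each of its test cases passes. The following sketch assumes a hypothetical representation in which each test case is a dictionary of enabled assertion names mapped to their results; how the platform treats an empty suite is not covered here.

```python
def case_passed(assertion_results: dict) -> bool:
    """A test case passes only if every enabled assertion passed."""
    return all(assertion_results.values())


def suite_status(case_results: list) -> str:
    """A suite is Passed only when every test case passed; otherwise it is Failed."""
    return "Passed" if all(case_results) else "Failed"


# Hypothetical results: disabled assertions are simply absent from the dictionary.
cases = [
    {"flow": True, "text": True},                    # Context assertion disabled
    {"flow": True, "text": False, "context": True},  # Text assertion failed
]

status = suite_status([case_passed(case) for case in cases])
print(status)  # Failed
```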